AI-Powered Data Standardization: The Ultimate Guide

Data is the lifeblood of modern organizations, but raw data is often messy, inconsistent, and unusable until it has been standardized. AI-powered data standardization uses artificial intelligence to make data clean, consistent, and ready for analysis.

AI-powered data analytics has become central to how businesses adopt new technologies for growth. According to a report by MarketsandMarkets, AI-powered data analytics, whose algorithms assess data at multiple levels, can improve employee experience by 60% and overall business output by 50%. The same report projects that the global AI-powered data analytics market will grow from $25.1 billion in 2023 to $70.2 billion by 2028, a CAGR of 22.8% over the 2023-2028 forecast period.
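The growth rate quoted from the report can be checked against its two market-size figures. The sketch below applies the standard compound annual growth rate formula to those numbers. The function name is ours, and the five-year span assumes the 2023 to 2028 forecast period.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures cited from the report: $25.1 billion (2023) to $70.2 billion (2028).
rate = cagr(25.1, 70.2, 5)
print(f"{rate:.1%}")  # prints 22.8%
```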

Understanding AI-Powered Data Standardization

AI-powered data standardization uses artificial intelligence to automate and improve the work of keeping data consistent across sources. It draws on technologies such as machine learning, natural language processing (NLP), and robotic process automation (RPA) to streamline standardization, making data more reliable, accurate, and ready for analysis.

Data standardization refers to the process of converting data from different formats and sources into a consistent format. This uniformity is crucial for accurate data analysis and reporting, as it eliminates discrepancies and ensures that data from different systems can be easily compared and integrated. AI-powered data standardization takes this a step further by automating the process, reducing manual effort, and significantly improving the speed and accuracy of data standardization tasks.
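To make "converting data into a consistent format" concrete, here is a minimal sketch of the rule-based core that any standardization layer, AI-assisted or not, ultimately has to apply. The two source systems ("crm" and "billing"), their field names, and the canonical schema are all hypothetical.

```python
# Hypothetical mapping from each source's field names to one canonical schema.
FIELD_MAPS = {
    "crm": {"FullName": "name", "Email": "email", "Country": "country"},
    "billing": {"customer_name": "name", "email_address": "email", "country_code": "country"},
}


def standardize(record, source):
    """Rename a record's fields and normalize its values to one shape."""
    mapping = FIELD_MAPS[source]
    out = {mapping[key]: value for key, value in record.items() if key in mapping}
    out["name"] = " ".join(out["name"].split()).title()  # collapse spaces, fix casing
    out["email"] = out["email"].strip().lower()
    out["country"] = out["country"].strip().upper()
    return out


crm_row = {"FullName": "  ada   LOVELACE", "Email": "Ada@Example.com", "Country": "gb"}
billing_row = {"customer_name": "Ada Lovelace", "email_address": "ada@example.com ", "country_code": "GB"}

print(standardize(crm_row, "crm") == standardize(billing_row, "billing"))  # True
```

Once both records share one shape they can be compared and merged directly. Note that `str.title()` is a naive casing rule and will mishandle some names, which is the kind of edge case a learned model might be brought in to handle.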

The Role of AI in Data Management

Automating Data Processing

AI can manage vast amounts of data by automating extraction, transformation, and loading (ETL) processes. This automation significantly reduces the need for manual intervention, minimizes errors, and accelerates data processing times, enabling organizations to handle big data more efficiently and focus on deriving insights rather than data handling.

Enhancing Data Accuracy

AI algorithms are capable of identifying and correcting inaccuracies in datasets. These algorithms learn from patterns and anomalies in data, ensuring that the data is accurate and reliable. Enhanced data accuracy leads to better decision-making and minimizes the risk of costly errors that can arise from poor data quality.
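As a rough illustration of flagging suspect values, the sketch below uses a fixed statistical rule, the modified z-score based on the median absolute deviation, as a simple stand-in for a learned model. The sample data is made up, and the constants 0.6745 and 3.5 are commonly used defaults for this rule rather than values from this article.

```python
from statistics import median


def flag_outliers(values, threshold=3.5):
    """Return the indices whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)  # median absolute deviation
    if mad == 0:
        return []  # no spread to measure against
    return [i for i, v in enumerate(values) if 0.6745 * abs(v - med) / mad > threshold]


order_totals = [102.0, 98.5, 101.2, 99.9, 10150.0, 100.4]  # made-up sample
print(flag_outliers(order_totals))  # [4]
```

A rule like this only flags candidates. Deciding whether 10150.0 is a typo for 101.50 or a genuine large order still needs context, which is where trained models or human review come in.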

Predictive Analysis

AI-driven predictive analytics uses historical data to forecast future trends and outcomes. This capability allows businesses to anticipate market changes, customer behavior, and operational needs. By leveraging predictive analytics, organizations can make proactive decisions, improve strategic planning, and gain a competitive edge in their industry.

Data Integration

AI simplifies the complex task of integrating data from multiple sources. It automatically identifies relationships between disparate datasets and merges them into a unified, consistent format. This seamless integration is crucial for creating comprehensive datasets that provide a holistic view of organizational performance and customer interactions.
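The merge step itself can be sketched without any AI at all, assuming the relationship between the datasets is already known. In this hypothetical example, two sources share an email address, which serves as the join key after normalization. The source names and fields are illustrative only.

```python
def merge_sources(crm_rows, support_rows):
    """Combine rows from two sources, keyed on a normalized email address."""
    unified = {}
    for source, rows in (("crm", crm_rows), ("support", support_rows)):
        for row in rows:
            key = row["email"].strip().lower()
            merged = unified.setdefault(key, {"email": key, "sources": []})
            merged["sources"].append(source)
            for field, value in row.items():
                if field != "email" and value not in (None, ""):
                    merged.setdefault(field, value)  # first non-empty value wins
    return list(unified.values())


crm = [{"email": "Ada@Example.com", "name": "Ada Lovelace", "phone": ""}]
support = [{"email": " ada@example.com ", "phone": "555-0100", "open_tickets": 2}]
print(merge_sources(crm, support))
```

The "first non-empty value wins" rule is one possible conflict policy among many. What AI-based tools add on top of a sketch like this is discovering which fields correspond when no shared key is declared.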

Real-Time Data Monitoring

AI systems can monitor data in real time, providing instant alerts for any anomalies or deviations from expected patterns. This real-time monitoring is essential for detecting issues early, ensuring data integrity, and enabling swift corrective actions, which is particularly important in industries where timely data is critical.
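A minimal sketch of streaming anomaly alerts follows, using a rolling window and a z-score limit in place of a trained model. The class name, window size, warm-up length of five readings, and limit of 3.0 are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev


class StreamMonitor:
    """Flag readings that deviate sharply from a rolling window of recent values."""

    def __init__(self, window=20, z_limit=3.0):
        self.recent = deque(maxlen=window)
        self.z_limit = z_limit

    def observe(self, value):
        """Return True if the value looks anomalous against recent history."""
        alert = False
        if len(self.recent) >= 5:  # wait for a small baseline first
            mu, sigma = mean(self.recent), stdev(self.recent)
            alert = sigma > 0 and abs(value - mu) / sigma > self.z_limit
        if not alert:
            self.recent.append(value)  # keep anomalies out of the baseline
        return alert


monitor = StreamMonitor(window=10)
readings = [20.1, 19.8, 20.3, 20.0, 19.9, 20.2, 35.0, 20.1]  # made-up sensor values
print([monitor.observe(r) for r in readings])
# [False, False, False, False, False, False, True, False]
```

Excluding flagged values from the window keeps one spike from distorting the baseline, at the cost of adapting slowly if the data shifts to a new normal level.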

Advanced Data Cleaning

Machine learning algorithms excel at identifying and removing duplicates, inconsistencies, and irrelevant data points. This advanced data cleaning ensures that datasets are accurate, comprehensive, and ready for analysis. Clean data is fundamental for generating trustworthy insights and making informed business decisions.
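Near-duplicate removal can be approximated with plain string similarity. The sketch below uses `difflib.SequenceMatcher` from the Python standard library as a stand-in for a trained matching model. The 0.85 threshold and the sample company names are assumptions for illustration.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase, drop simple punctuation, and collapse whitespace."""
    return " ".join(text.lower().replace(".", "").replace(",", "").split())


def dedupe(names, threshold=0.85):
    """Keep the first spelling of each group of near-duplicate names."""
    kept = []
    for name in names:
        candidate = normalize(name)
        is_duplicate = any(
            SequenceMatcher(None, candidate, normalize(k)).ratio() >= threshold
            for k in kept
        )
        if not is_duplicate:
            kept.append(name)
    return kept


companies = ["Acme Corp.", "ACME Corp", "Acme Corpp", "Globex Inc", "globex inc."]
print(dedupe(companies))  # ['Acme Corp.', 'Globex Inc']
```

This catches casing, punctuation, and small typos, but it would treat "Acme Corp" and "Acme Corporation" as different entities. Pairwise comparison also scales quadratically, so larger datasets typically need blocking or indexing first.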

Natural Language Processing (NLP)

NLP enables AI to process and understand unstructured data from text, emails, and documents. This capability allows organizations to unlock valuable insights from textual data that would otherwise be difficult to analyze. NLP can transform customer feedback, social media posts, and other text data into actionable information.

Data Security

AI enhances data security by continuously monitoring for unusual activities and potential breaches. AI systems can detect patterns that indicate security threats, allowing organizations to respond quickly to protect sensitive information. This proactive approach to data security helps prevent data breaches and ensures compliance with data protection regulations.

Key Tools and Techniques for Data Standardization

Data Standardization Tools

Software such as Talend, Informatica, and Alteryx automates the process of data standardization. These tools provide robust features for data integration, cleansing, and transformation, ensuring that data is consistent and ready for analysis. They significantly reduce manual effort and improve data quality.

Machine Learning Algorithms

Machine learning algorithms identify patterns and anomalies in data, helping to ensure it meets predefined standards. These algorithms continuously learn and adapt, improving their accuracy and effectiveness over time. They are essential for automating data standardization processes and enhancing data quality.

Data Integration Tools

Tools like Apache NiFi and Microsoft Azure Data Factory facilitate the integration of data from various sources. They ensure that data is merged into a consistent format, providing a unified view of organizational data. These tools are crucial for managing data from diverse systems and platforms.

Data Cleansing Tools

Software solutions dedicated to data cleansing identify and correct errors, inconsistencies, and duplicates in datasets. These tools use advanced algorithms to ensure data accuracy and completeness, which is essential for reliable analysis and reporting.

Robotic Process Automation (RPA)

RPA bots automate repetitive data standardization tasks, increasing efficiency and accuracy. RPA can handle tasks such as data entry, validation, and transformation, freeing up human resources for more strategic activities. This automation enhances productivity and reduces the risk of errors.

Data Governance Frameworks

Establishing policies and procedures for data governance ensures that data is managed according to organizational standards and regulatory requirements. A robust data governance framework oversees data quality, security, and compliance, supporting effective data standardization efforts.

ETL Processes

Extract, Transform, Load (ETL) processes are fundamental for data standardization. ETL tools extract data from various sources, transform it into a standard format, and load it into a data warehouse. This ensures that data is consistent and ready for analysis, supporting accurate and efficient decision-making.
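The three ETL stages can be sketched end to end with the Python standard library. Here an in-memory SQLite database stands in for the data warehouse, and the CSV content, table name, and column names are invented for the example.

```python
import csv
import io
import sqlite3

RAW_CSV = """order_id,amount,currency
1001, 19.99 ,usd
1002,5,USD
1003,,usd
"""


def extract(text):
    """Extract: read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim values, fix types, and uppercase currency codes."""
    clean = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip rows with no amount; a real pipeline might quarantine them
        clean.append((int(row["order_id"]), round(float(amount), 2), row["currency"].strip().upper()))
    return clean


def load(rows, conn):
    """Load: write the standardized rows into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS orders (order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
# [(1001, 19.99, 'USD'), (1002, 5.0, 'USD')]
```

Keeping the stages as separate functions makes each one testable on its own, which matters more as the transformation rules grow.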

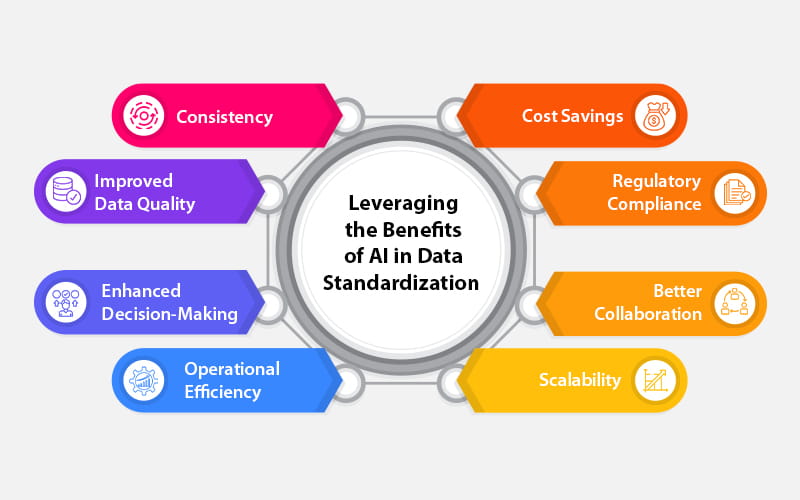

Benefits of Data Standardization

Consistency

Data standardization ensures that data follows a uniform format across different systems and departments. Consistent data is easier to analyze, share, and interpret, leading to more accurate insights and decision-making. Consistency also reduces the risk of errors and misunderstandings caused by data discrepancies.

Improved Data Quality

Standardized data reduces errors and discrepancies, resulting in high-quality datasets. Improved data quality is essential for reliable analysis and reporting, as it ensures that the information used for decision-making is accurate and complete. High-quality data enhances the credibility of insights derived from it.

Enhanced Decision-Making

When data is standardized, it becomes more reliable and easier to analyze. Enhanced decision-making is a direct result of having access to accurate, consistent data that provides a clear picture of business performance, market trends, and customer behavior. This leads to better strategic planning and operational decisions.

Operational Efficiency

Data standardization tools streamline data processes, reducing the time and effort required to manage and analyze data. This increased efficiency allows organizations to allocate resources more effectively, focus on core business activities, and respond more quickly to market changes and operational challenges.

Cost Savings

By reducing the need for manual data cleaning and reformatting, data standardization lowers operational costs. Additionally, accurate data minimizes the risk of making costly decisions based on incorrect information. These cost savings can be significant, freeing up resources for other strategic initiatives.

Regulatory Compliance

Data standardization ensures that data meets legal and industry standards, which is crucial for regulatory compliance. Standardized data simplifies the process of reporting to regulatory bodies, reduces the risk of non-compliance, and helps avoid penalties associated with regulatory breaches.

Better Collaboration

Standardized data facilitates easier sharing and collaboration across different teams and departments. When everyone works with the same data standards, it reduces misunderstandings and errors, leading to more effective teamwork and a unified approach to achieving organizational goals.

Scalability

Standardized data processes are easier to scale as an organization grows. Scalability ensures that data management practices can handle increasing data volumes without compromising quality or consistency. This is vital for maintaining high performance and efficiency in expanding businesses.

Data Standardization Best Practices

Consistency in Data Entry

Ensuring that data is entered consistently across all systems prevents discrepancies and errors. Consistent data entry practices standardize formats and values, reducing the risk of incorrect or incomplete data. This is fundamental for maintaining data quality and reliability.

Standardized Formats

Using standardized formats for common data fields such as dates, addresses, and names ensures uniformity. Standardized formats make data easier to merge, analyze, and compare, reducing the risk of errors and improving overall data quality.
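Dates are a common place to start. The sketch below converts several input layouts to ISO 8601 (YYYY-MM-DD). The list of accepted layouts is an assumption, and so is their order: it reads 03/07/2024 as month/day/year, which would be wrong for day-first sources.

```python
from datetime import datetime

# Candidate layouts, tried in order (an assumption for this example).
KNOWN_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d %b %Y", "%B %d, %Y")


def to_iso_date(raw):
    """Return the date as YYYY-MM-DD, or None if no known layout matches."""
    text = raw.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # try the next layout
    return None


for value in ["2024-03-07", "03/07/2024", "7 Mar 2024", "March 7, 2024", "next Tuesday"]:
    print(value, "->", to_iso_date(value))
```

Returning `None` for unrecognized input, rather than guessing, lets the caller route those values to review. Month names are parsed according to the current locale, which this example assumes is English.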

Unique Identifiers

Implementing unique identifiers for entities like customers, products, and transactions avoids duplication and confusion. Unique identifiers ensure that each data point is distinct and easily traceable, which is essential for accurate data management and analysis.
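One way to get stable identifiers is to derive them deterministically from a normalized natural key, so the same customer maps to the same ID in every system. The sketch uses name-based UUIDs (`uuid.uuid5`) from the standard library. The namespace string and the choice of email as the key are assumptions for the example.

```python
import uuid

# Hypothetical namespace for this example; any fixed UUID would work.
CUSTOMER_NAMESPACE = uuid.uuid5(uuid.NAMESPACE_DNS, "customers.example.com")


def customer_id(email):
    """Derive a stable identifier from a normalized email address."""
    return str(uuid.uuid5(CUSTOMER_NAMESPACE, email.strip().lower()))


print(customer_id("Ada@Example.com") == customer_id(" ada@example.com "))  # True
print(customer_id("ada@example.com") == customer_id("grace@example.com"))  # False
```

The trade-off is that the ID changes if the underlying key changes, for instance when a customer updates their email. Where that matters, a generated surrogate key stored alongside the record is the safer choice.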

Data Validation

Applying validation rules to data entry processes catches errors and inconsistencies early. Data validation ensures that data meets predefined standards before it is stored, reducing the need for later corrections and improving overall data quality.
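Validation rules can be expressed as a small table of per-field checks that runs before a record is stored. The fields, limits, and the deliberately loose email pattern below are illustrative assumptions, not a recommended rule set.

```python
import re

EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately loose

# Hypothetical rules: each maps a field name to a check returning True or False.
RULES = {
    "email": lambda v: isinstance(v, str) and EMAIL_PATTERN.match(v) is not None,
    "age": lambda v: isinstance(v, int) and 0 <= v <= 130,
    "country": lambda v: isinstance(v, str) and len(v) == 2 and v.isupper(),
}


def validate(record):
    """Return the names of fields that are missing or break a rule."""
    return [
        field
        for field, rule in RULES.items()
        if field not in record or not rule(record[field])
    ]


print(validate({"email": "ada@example.com", "age": 36, "country": "GB"}))  # []
print(validate({"email": "not-an-email", "age": 200}))  # ['email', 'age', 'country']
```

Returning the list of failing fields, rather than a single pass or fail flag, makes it easier to report precisely what needs correcting at entry time.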

Metadata Management

Maintaining detailed metadata for every dataset provides a record of its source, structure, and the standards applied to it. Metadata management enhances data traceability, making it easier to understand and use data effectively. It supports data governance and compliance efforts.

Periodic Audits

Conducting periodic data audits ensures ongoing compliance with data standards and identifies areas for improvement. Regular audits verify data accuracy and consistency, helping maintain high data quality and addressing any emerging issues promptly.

Cross-Departmental Collaboration

Encouraging collaboration between departments ensures data standards are understood and followed organization-wide. Cross-departmental collaboration promotes a unified approach to data management, reducing the risk of errors and improving overall data quality.

Continuous Improvement

Regularly reviewing and updating data standards and practices keeps them aligned with changing business needs and technological advances. Continuous improvement ensures that data management practices remain effective and aligned with organizational goals, supporting long-term data quality and reliability.

The Future of AI in Data Standardization

Advanced Machine Learning

Continuous advancements in machine learning will enhance the accuracy and efficiency of data standardization processes. More sophisticated algorithms will improve pattern recognition and anomaly detection, making data standardization more effective and reducing the need for manual intervention.

AI-Driven Automation

Increased reliance on AI for automating complex data standardization tasks will reduce the need for human oversight. AI-driven automation will handle larger datasets more efficiently, ensuring consistent and accurate data across the organization. This will lead to significant improvements in data management practices.

Real-Time Data Standardization

AI will enable real-time data standardization, ensuring that data is immediately consistent and usable upon entry. Real-time standardization will support faster decision-making and more responsive business operations, providing a competitive edge in dynamic markets.

Integration with IoT

AI will standardize data from IoT devices, ensuring that the massive volumes of data generated are clean and consistent. This integration will support better analysis and utilization of IoT data, enabling smarter and more efficient operations across various industries.

Enhanced Data Security

AI will play a critical role in identifying and mitigating data security risks, ensuring that standardized data remains protected. Advanced AI systems will detect and respond to security threats more effectively, safeguarding sensitive information and ensuring compliance with data protection regulations.

Predictive Data Quality

AI will predict potential data quality issues before they occur, allowing for proactive data standardization. Predictive analytics will identify trends and anomalies that could impact data quality, enabling organizations to address issues early and maintain high data standards.

Scalable AI Solutions

AI-driven data standardization solutions will become more scalable, catering to the growing data needs of large organizations and industries. Scalable solutions will ensure that data management practices can handle increasing volumes and complexity without compromising quality or efficiency, supporting long-term business growth.

Conclusion

AI-powered data standardization is a game changer in the realm of data management. By automating the standardization process, improving data quality, and ensuring regulatory compliance, AI enables organizations to harness the full potential of their data. As we look to the future, the continuous advancements in AI technologies promise even more efficient and effective data standardization solutions, driving better decision-making and operational efficiency.

By understanding and leveraging the power of AI in data standardization, organizations can ensure their data is accurate, consistent, and ready to drive meaningful insights and business growth. To learn more about implementing AI and its impact on your data management, connect with our experts today.